With the upcoming enforcement of the Cyber Resilience Act in the European Union, the entire software industry is currently trying to get its vulnerability management under control. By 2027 companies will be legally required to continuously identify and report known vulnerabilities in their software components within very strict timeframes. This makes manual tracking of dependencies infeasible and requires automated vulnerability management systems. In my job at {metæffekt} GmbH I spend most of my time developing automated systems that attempt to map components from a Software Bill of Materials (SBOM) to vulnerability databases like the National Vulnerability Database (NVD). This mapping process was the subject of my bachelor's thesis at the Technische Hochschule Mannheim.

The underlying problem is that identifying software is messy in reality. When a vulnerability is published, it is usually assigned a CVE (Common Vulnerabilities and Exposures) identifier and linked to a specific software configuration via a Common Platform Enumeration (CPE) string. In a perfect world every software artifact would map cleanly to exactly one CPE. In reality the CPE dictionary is filled with typos, duplicate entries from different researchers trying to claim credit, inconsistent naming schemes, and unclear relationships to the projects they try to describe. For example, the Cyrus SASL library is registered under its official name, but also under various university acronyms and aliases with different underscore placements. Because the automated systems cannot reliably manage this chaos on their own, our team currently maintains a big manual "correlation" dataset to correct false positive and false negative mappings.

- affects:

- Id: "snappy-*"

ignores:

- Id: "snappy-*.jar"

append:

Inapplicable CPE URIs: "cpe:/a:knplabs:snappy"

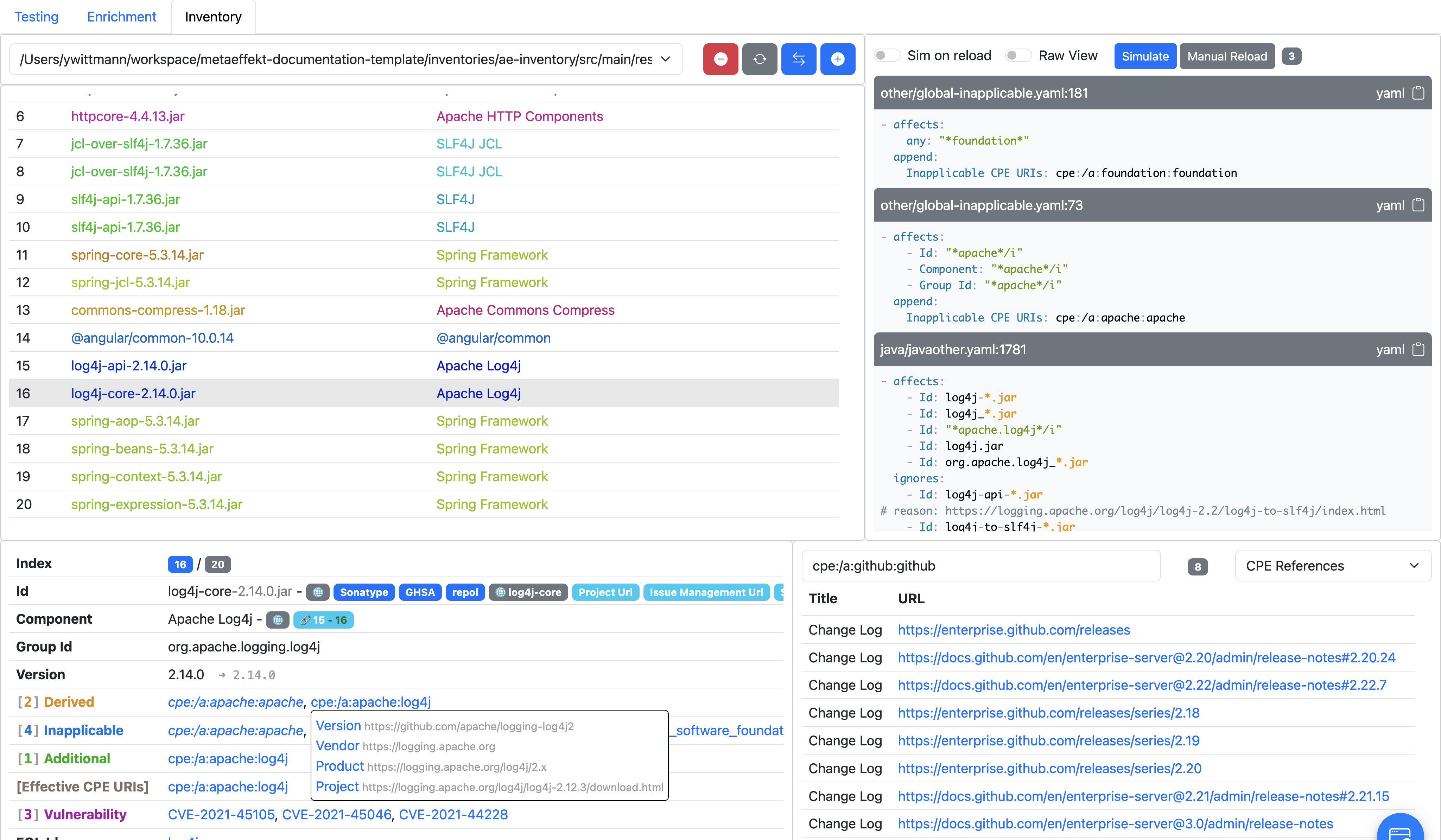

Additional CPE URIs: "cpe:/a:google:snappy"This correlation dataset is essentially a set of YAML files that currently has a total of 30,000 lines. It operates sequentially by defining a selector for a specific artifact and then appending or removing CPE strings from it. While this worked fine for a few years, it reached a point where maintaining it was becoming more time-consuming.

The main issue was that the sequential nature of the file created implicit dependencies. If you wanted to map the identifier redis to the database server, you would write a rule for it. But if the artifact actually referred to the redis Python client, you had to write a second rule further down the file that specifically matched the Python environment, explicitly removed the database CPE, and added the Python CPE. If anyone accidentally changed the order of these files or entries, the entire logic would break silently.
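The order dependency described above can be reproduced in a few lines. This is a minimal Python sketch, not the actual rule engine: the rule fields `remove` and `add`, the `Ecosystem` attribute, and both CPE strings are hypothetical and chosen only for illustration.

```python
from fnmatch import fnmatch

# Hypothetical CPE strings, used only for illustration.
SERVER_CPE = "cpe:/a:example:redis-server"
CLIENT_CPE = "cpe:/a:example:redis-python-client"


def apply_rules(artifact, rules):
    """Apply every matching rule in file order and return the resulting CPE set."""
    cpes = set()
    for rule in rules:
        selector = rule["affects"]
        if all(fnmatch(artifact.get(key, ""), pattern) for key, pattern in selector.items()):
            cpes -= set(rule.get("remove", ()))
            cpes |= set(rule.get("add", ()))
    return cpes


# Generic rule: anything called redis* is assumed to be the database server.
generic_rule = {"affects": {"Id": "redis*"}, "add": [SERVER_CPE]}
# Correction rule: in the Python ecosystem, swap the server CPE for the client CPE.
python_rule = {
    "affects": {"Id": "redis*", "Ecosystem": "pypi"},
    "remove": [SERVER_CPE],
    "add": [CLIENT_CPE],
}
artifact = {"Id": "redis-4.5.1", "Ecosystem": "pypi"}

intended = apply_rules(artifact, [generic_rule, python_rule])
swapped = apply_rules(artifact, [python_rule, generic_rule])

print(sorted(intended))  # only the client CPE
print(sorted(swapped))   # client CPE plus the server CPE as a false positive
```

With the rules swapped, the removal runs before the server CPE has been added, so it has nothing to remove and the false positive comes back without any error being raised.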

An internal tool called the "Correlation Utilities" was used to assist our team in maintaining the entries.

The requirements collection and concept phase of my thesis took over half of the time available, so the schedule got quite tight towards the end. The approach I landed on was to move away from linear text replacements and instead model the relationships as a directed graph. The core idea of my thesis is to strictly separate the conceptual Product from its technical Representation. A Product is a logical entity, like the Apache Log4j core library. A Representation is how this product appears in various external ecosystems, like a CPE string, a Package URL, or a Microsoft Product Id. By treating both Products and Representations as distinct nodes in a graph, we can define explicit semantic edges between them.

I define three primary types of edges for this model.

The `is` edge represents a positive attribution, meaning a representation fully describes the product.

The `is not` edge handles the explicit exclusions, allowing us to proactively prevent false identifications without relying on parsing order.

Finally, the `inherit` edge allows nodes to share baseline attributes.

Whenever a software artifact needs to be evaluated, the system traverses this graph starting from the artifact node, aggregates all transformations and metadata along the path, and resolves the correct representations.

This way, you can model semantic relationships between product "bubbles".
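To make the three edge types more concrete, here is a minimal Python sketch of such a graph. It is not the thesis implementation, which is written in Java. The class name, the edge direction (product to representation), the `candidates` parameter, and the node names are all assumptions made for illustration.

```python
from collections import defaultdict

EDGE_TYPES = ("is", "is not", "inherit")


class CorrelationGraph:
    """Toy graph: product nodes point to representations or to parent products."""

    def __init__(self):
        self.edges = defaultdict(list)  # source node -> [(edge type, target node)]

    def add_edge(self, source, edge_type, target):
        if edge_type not in EDGE_TYPES:
            raise ValueError("unknown edge type: " + edge_type)
        self.edges[source].append((edge_type, target))

    def resolve(self, product, candidates=()):
        """Return the representations for a product.

        `candidates` are guesses from an automated matcher. Exclusions always
        win over inclusions, independent of the order in which edges were added.
        """
        included, excluded, visited = set(), set(), set()
        stack = [product]
        while stack:
            node = stack.pop()
            if node in visited:
                continue  # guards against inheritance cycles
            visited.add(node)
            for edge_type, target in self.edges[node]:
                if edge_type == "is":
                    included.add(target)
                elif edge_type == "is not":
                    excluded.add(target)
                else:  # "inherit": also collect the parent's edges
                    stack.append(target)
        return (set(candidates) | included) - excluded


graph = CorrelationGraph()
graph.add_edge("product:log4j", "is", "cpe:/a:apache:log4j")
graph.add_edge("product:log4j", "is not", "cpe:/a:apache:log4net")
graph.add_edge("product:log4j-core", "inherit", "product:log4j")
graph.add_edge("product:log4j-core", "is", "pkg:maven/org.apache.logging.log4j/log4j-core")

# A naive name matcher might propose both CPEs; the "is not" edge filters one out.
guesses = ["cpe:/a:apache:log4j", "cpe:/a:apache:log4net"]
resolved = graph.resolve("product:log4j-core", candidates=guesses)
print(sorted(resolved))
```

Because the exclusion is stored as an edge rather than as a later rule, the result stays the same no matter in which order the edges are inserted.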

Implementing this graph engine came with some specific technical constraints. Because it had to integrate into the existing {metæffekt} infrastructure, the codebase was written in Java 8. Furthermore, the resulting correlation dataset needed to be shipped to customer environments without requiring them to maintain dedicated database servers like MongoDB or external Lucene instances, so I decided to use SQLite as the persistence layer. To maintain flexibility for future node attributes without constantly migrating the database schema, I store the highly variable node properties as serialized JSON objects and read them with SQLite's JSON extraction functions, while keeping the core routing attributes as standard columns.
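The storage idea can be sketched with Python's built-in `sqlite3` module. The table layout, column names, and property keys below are assumptions for illustration and not the actual schema. The sketch also assumes an SQLite build that includes the JSON functions, which most current distributions do.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")

# Attributes needed for routing are real columns; everything else is a JSON document.
conn.execute(
    """
    CREATE TABLE node (
        id         TEXT PRIMARY KEY,
        kind       TEXT NOT NULL,            -- e.g. 'product' or 'representation'
        properties TEXT NOT NULL DEFAULT '{}' -- serialized JSON object
    )
    """
)

nodes = [
    ("product:log4j-core", "product", {"vendor": "apache", "language": "java"}),
    ("cpe:/a:apache:log4j", "representation", {"scheme": "cpe"}),
    # A new attribute needs no schema migration, it is just another JSON key.
    ("product:windows-10", "product", {"vendor": "microsoft", "architectures": ["x86", "x64"]}),
]
conn.executemany(
    "INSERT INTO node (id, kind, properties) VALUES (?, ?, ?)",
    [(node_id, kind, json.dumps(props)) for node_id, kind, props in nodes],
)

# Filter on a regular column and on a value inside the JSON document.
rows = conn.execute(
    """
    SELECT id FROM node
    WHERE kind = 'product' AND json_extract(properties, '$.vendor') = ?
    ORDER BY id
    """,
    ("apache",),
).fetchall()
print(rows)
```

The trade-off is that values inside the JSON column are not covered by ordinary column indexes, which is why attributes used for traversal stay in regular columns.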

To stress-test this new system, I picked Microsoft Windows 10 as a product to create a sub-graph for. Microsoft products are notoriously difficult to map because their vulnerability-related product identifiers are unique numeric identifiers that differ across versions, builds, and system architectures like 32-bit and 64-bit. In the old YAML format this required duplicating the same base attributes over and over again for every single patch level. In the graph model I was able to build a hierarchy. A base Windows 10 node defines the general properties, specific version nodes inherit from it and apply a transformation rule to the version strings, and architecture nodes sit at the very bottom. The graph resolution algorithm automatically traverses up the inheritance chain and picks the most specific node available.
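The inheritance chain can be illustrated with a small Python sketch. The node names, property keys, and the product id value are placeholders invented for this example, and the version transformation rules are left out to keep it short.

```python
# Each node stores only what differs from its parent (all values are placeholders).
NODES = {
    "windows-10": {
        "parent": None,
        "props": {"vendor": "microsoft", "product": "windows_10"},
    },
    "windows-10-22h2": {
        "parent": "windows-10",
        "props": {"version": "22H2"},
    },
    "windows-10-22h2-x64": {
        "parent": "windows-10-22h2",
        "props": {"architecture": "x64", "product_id": "placeholder-id"},
    },
}


def resolve_properties(node_id, nodes):
    """Walk up the inheritance chain and merge properties, most specific last."""
    chain = []
    while node_id is not None:
        node = nodes[node_id]
        chain.append(node)
        node_id = node["parent"]
    merged = {}
    for node in reversed(chain):  # base node first, so specific nodes override it
        merged.update(node["props"])
    return merged


resolved_props = resolve_properties("windows-10-22h2-x64", NODES)
print(resolved_props)
```

The base attributes are written once on the `windows-10` node, and every version or architecture node below it only adds or overrides what is specific to it.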

Of course, introducing a graph traversal algorithm into a system that previously just read sequential text files impacts performance. I ran benchmarks comparing the legacy system against the new SQLite graph implementation using synthetic inventories of up to 6,000 artifacts. The graph system is roughly 1.8 times slower than the old system. However, the scaling remains linear, and the absolute processing time for a massive software inventory is still well within the acceptable limits for a background pipeline. Considering the massive gains in data integrity, the elimination of redundant entries, and the ability to read the reasoning behind every single correlation decision in machine-readable form, this performance trade-off is completely acceptable.

Submitting a 101-page document and successfully defending it in the colloquium was a huge relief. While there are definitely still areas for improvement, like building an interactive visualization tool for the nodes or migrating the entire legacy dataset over to the new schema, the theoretical and technical foundation is fully functional. I am very grateful to everyone who supported me through this process.